How observability helps with reliability

What observability means, how to set service level objectives (SLOs), how observability helps make systems more reliable, and how to validate your observability practice.

To improve the reliability of distributed systems, we need to understand how they’re behaving. This doesn’t just mean collecting metrics, but having the ability to answer specific questions about how a system is operating and whether it’s at risk of failing. This is even more important for the large, complex, distributed systems that we build and maintain today. Observability helps us answer these questions.

In this section, we’ll explain what observability is, how it helps solve complex questions about our environments, and how it contributes towards improving the reliability of our systems and processes.

What is observability?

Observability is a measure of how well a system’s internal state can be understood from its outputs. Observability data is collected from systems, aggregated, and processed to help engineers understand how their systems are performing. Engineers use this data to gain a holistic view of their systems, troubleshoot incidents, monitor for problems, and make operational decisions.

Observability data is often categorized into three pillars: logs, metrics, and traces. Logs record discrete events that happen within a system. Metrics record measurements about various components within a system, such as uptime, error rate, service latency, request throughput, or resource usage. Traces record data about transactions moving between system components, such as a user request passing from a frontend web service to a backend database. This data is exposed and collected from systems through a process called instrumentation.
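As a rough illustration of instrumentation, the sketch below emits all three kinds of data for one simulated request using only the Python standard library. The names `handle_request`, `span`, `metrics`, and `spans` are hypothetical and chosen for this example; a real system would typically send this data to a monitoring tool rather than keep it in memory.

```python
import logging
import time
import uuid
from collections import Counter
from contextlib import contextmanager

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("checkout")  # logs: discrete events

metrics = Counter()  # metrics: numeric measurements (in-memory stand-in)
spans = []           # traces: timed steps that share one trace ID


@contextmanager
def span(trace_id, name):
    """Record how long one step of a request took."""
    start = time.perf_counter()
    try:
        yield
    finally:
        spans.append({
            "trace_id": trace_id,
            "name": name,
            "duration_s": time.perf_counter() - start,
        })


def handle_request(fail=False):
    """Simulate a frontend request that calls a backend database."""
    trace_id = uuid.uuid4().hex
    metrics["requests_total"] += 1
    with span(trace_id, "frontend"):
        try:
            with span(trace_id, "database"):
                if fail:
                    raise RuntimeError("database unavailable")
        except RuntimeError as exc:
            metrics["errors_total"] += 1
            log.error("request failed trace_id=%s error=%s", trace_id, exc)
            return trace_id, 500
    log.info("request ok trace_id=%s", trace_id)
    return trace_id, 200
```

Because every log line and span carries the same trace ID, an engineer can move from an error-rate metric to the failing request's log entry and then to the step where it failed.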

Good observability lets engineers answer questions about the state of their systems, including questions they didn’t anticipate in advance. This is important for reliability, since we need to understand how our systems are operating if we want to improve them.

“You can’t predict what information you’re going to need to know to answer a question you also couldn’t predict. So you should gather absolutely as much context as possible, all the time.”

Charity Majors, CTO, Honeycomb

Is observability a requirement for reliability?

The short answer is: you need at least some visibility into your systems before starting a reliability initiative, but you don’t need a fully mature observability practice.

The more detailed answer is: observability plays a key role in helping us:

- Understand how complex distributed systems work.

- Measure the state of our systems in a clear, objective, and meaningful way.

- Establish baselines and targets for reliability and performance.

- Raise alerts and notify SREs if our systems enter an undesirable state.

This creates a foundation on which we can build reliability initiatives. Being able to objectively measure complex system behaviors means operations teams and site reliability engineering (SRE) teams can:

- Detect and pinpoint failure modes, or the causes of failure in a system.

- Access useful debugging information in near real-time, including events leading up to the failure.

- Set concrete reliability goals in the form of key performance indicators (KPIs) and track progress towards achieving those goals.

- Measure the value of a reliability initiative using metrics such as increased availability, reduced mean time to detection (MTTD), reduced mean time to resolution (MTTR), and fewer bugs shipped to production.
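As a minimal sketch of how MTTD and MTTR might be derived from incident records, the example below averages the time from incident start to detection and to resolution. The `Incident` class and its fields are hypothetical, and measuring both values from the start of the incident is an assumption; some teams measure MTTR from the moment of detection instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    started: datetime   # when the failure began
    detected: datetime  # when monitoring or a person noticed it
    resolved: datetime  # when service was restored


def mttd(incidents):
    """Mean time to detection, measured from the start of each incident."""
    seconds = [(i.detected - i.started).total_seconds() for i in incidents]
    return timedelta(seconds=mean(seconds))


def mttr(incidents):
    """Mean time to resolution, measured from the start of each incident."""
    seconds = [(i.resolved - i.started).total_seconds() for i in incidents]
    return timedelta(seconds=mean(seconds))
```

Tracking these two values before and after a reliability initiative gives a simple way to show whether detection and recovery are actually getting faster.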

Observability data also plays an important business role. Reliability is both a financial and time investment, and engineering teams need to justify the cost of a reliability initiative by demonstrating clear benefits. Without this, organizations are less likely to prioritize and follow through with these initiatives.

“You don’t just want [your measurement] to be a system metric. You want to tie it back to your customer experience and/or revenue. Depending on your business, you can correlate these to revenue like Amazon did, [for example,] ‘improve response time by this, increase revenue by that’.”

Marco Coulter, Technical Evangelist, AppDynamics

How does observability help improve reliability?

Making our systems observable doesn’t automatically make them more reliable, but it does give us the necessary insights to improve their reliability. These inform our decisions about how to address failure modes, and help measure the impact that our efforts have on availability, uptime, and performance. This includes:

- Measuring the cost of downtime and showing how a reduction in incidents corresponds to increased revenue.

- Tracking improvements to system availability, performance, and stability throughout a reliability initiative.

- Tracking the amount of time that engineering teams spend troubleshooting, debugging, and fixing systems, and the associated costs. This includes the missed opportunity costs of diverting engineering resources away from revenue-generating activities, like building new products and features.

Next, let’s look at how we can use observability data to start improving reliability.

Define your priorities and objectives

When starting an observability practice, the temptation is to collect as much data as possible. This can quickly lead to information overload and flood your SREs with irrelevant dashboards and alerts. Many teams start with the four golden signals (latency, traffic, errors, and saturation) popularized by Google, but these are only a starting point: every team has different requirements and expectations for how their systems should operate.

The way to use observability effectively is to focus on what’s important to your organization. Your most important metrics are those that capture your customer experience. For example, if you’re an online retailer, the most important qualities of your systems might be:

- High availability of critical services in your application, such as your website frontend, checkout service, or payment processor. If any of these services fails, the entire application is at risk.

- High scalability, especially during periods of peak traffic such as Black Friday and Cyber Monday. The last thing customers want to see during a shopping event is an error page or loading screen.

- Low response time for user-facing services, such as your frontend. The lower the response time, the more performant the service is and the more satisfied customers are.

Set service levels

After identifying the most important metrics, we need to set acceptable thresholds. For example, because our website is a customer-facing service that provides critical functionality, we want to have a very high level of availability. Our customers also expect a high level of availability: if our website is unavailable or slow, they’ll likely go to a competitor. In order to build trust with our customers, we need to set expectations for the level of service they can expect when using our services.

This is commonly done using service level agreements (SLAs), which are contracts between a service provider and an end user promising a minimum quality of service, usually in the form of availability or uptime. If a service fails to meet its SLA, its users can be entitled to discounts or reimbursements, creating a financial incentive for improving reliability.

To create an SLA, organizations first determine the experience they want to provide for their customers, then identify the metrics that accurately reflect that experience. This is usually a joint effort between the Product and Engineering teams: Product defines the expected level of service, and Engineering identifies the metrics to measure and their acceptable ranges. These metrics are service level indicators (SLIs), and the acceptable ranges are service level objectives (SLOs).
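To make the relationship between an SLI and an SLO concrete, here is a small sketch that computes an availability SLI as the fraction of successful requests and compares it against a target. The `SLO` class, `availability_sli`, and `meets_slo` are hypothetical names, and treating a window with no traffic as fully available is an assumption made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SLO:
    name: str
    target: float  # acceptable lower bound for the SLI, e.g. 0.999


def availability_sli(successful_requests, total_requests):
    """SLI: the fraction of requests that succeeded in the window."""
    if total_requests == 0:
        return 1.0  # assumption: no traffic counts as fully available
    return successful_requests / total_requests


def meets_slo(sli, slo):
    """The SLO is met when the measured SLI is at or above the target."""
    return sli >= slo.target
```

For example, 9,990 successful requests out of 10,000 gives an SLI of 0.999, which just meets a three nines objective, while 9,989 successful requests falls short of it.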

In distributed systems, SLAs are often expressed as “nines” of availability measured over a period of time. For example, two nines (99%) means a system can’t be unavailable for more than 3.65 days per year. Three nines (99.9%) leaves only 8.77 hours of downtime per year. Forward-looking companies strive for high availability (HA), which means four nines (99.99%, or 52.6 minutes of downtime per year) or higher. This might seem like a high bar, but consider that for an online retailer, a second of downtime can mean thousands of dollars in lost sales.

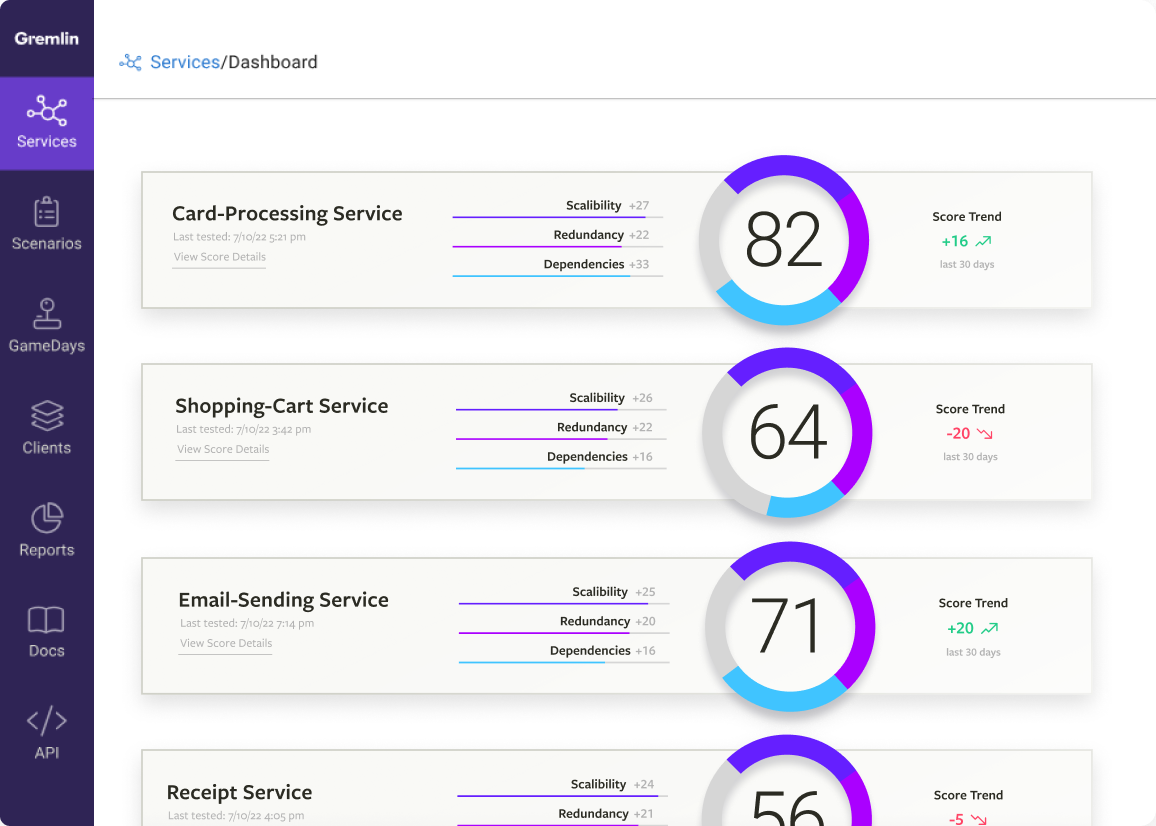
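The downtime figures for each number of nines follow from simple arithmetic: allowed downtime is the measurement period multiplied by one minus the availability target. The sketch below assumes a 365.25-day year, which is consistent with the figures quoted above; `allowed_downtime` is a hypothetical helper name.

```python
from datetime import timedelta

# Assumption: a year is 365.25 days, which matches the figures quoted above.
YEAR = timedelta(days=365.25)


def allowed_downtime(availability, period=YEAR):
    """Maximum downtime permitted by an availability target over a period."""
    return period * (1 - availability)


for target in (0.99, 0.999, 0.9999):
    hours = allowed_downtime(target).total_seconds() / 3600
    print(f"{target:.2%} availability allows about {hours:.2f} hours of downtime per year")
```

The same function works for shorter windows, for example `allowed_downtime(0.999, timedelta(days=30))` for a 30-day SLO period.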

Validate adherence to your service levels

With a target service level established, we should continuously validate that our systems are adhering to our targets. We start by instrumenting our systems for the logs, metrics, and traces we determined are necessary to our business objectives. Tools like Amazon CloudWatch, Grafana, Prometheus, Datadog, and New Relic help collect and consolidate this data into a system that our SREs can easily use to monitor system behavior. We can create dashboards that show not only how our systems are performing, but also how well they’re adhering to our SLOs. If they’re at risk of falling outside those targets, monitoring and automated alerting will notify our SREs and incident response teams. Automating as much as possible helps ensure that our teams are notified quickly when problems occur.
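One common way to express "at risk of falling outside" an SLO is an error budget: the number of failures the SLO allows over a window. The sketch below is a tool-agnostic illustration, not the API of any monitoring product named above. The function names and the 25% warning threshold are assumptions chosen for the example.

```python
def error_budget_remaining(slo_target, total_requests, failed_requests):
    """Fraction of the window's error budget still unspent (negative if overspent)."""
    budget = (1 - slo_target) * total_requests  # failures the SLO allows
    if budget == 0:
        return 1.0 if failed_requests == 0 else 0.0
    return 1 - failed_requests / budget


def should_alert(slo_target, total_requests, failed_requests, warn_at=0.25):
    """Alert once less than `warn_at` of the error budget remains."""
    remaining = error_budget_remaining(slo_target, total_requests, failed_requests)
    return remaining < warn_at
```

With a 99.9% target and one million requests in the window, the budget is roughly 1,000 failed requests. After 500 failures about half of the budget remains and no alert fires; after 800 failures only about 20% remains, so the check would notify the on-call team before the SLO is actually breached.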

Now that we have insight into our systems and can detect when something goes wrong, we can start focusing on where we can improve. We start by looking for areas where we’re not meeting (or just barely meeting) our SLOs, and consider how we can engineer our systems to address this risk. After deploying a fix, continuing to instrument and monitor our systems confirms whether the fix actually addressed the problem. Using this feedback loop lets us continue improving our systems until we achieve our targets, and automation helps us maintain that level of adherence.


Validate your observability and reliability practices using Chaos Engineering

Just having an observability practice in place doesn’t mean we’re in the clear. We need to validate that we’re tracking the right metrics, that our dashboards are reporting relevant information, and that our alerts notify the right people at the right time.

While we could wait for a production incident to occur, this is a reactive approach and exposes us to risk. Instead, what if we could proactively test our observability practice by simulating production conditions? For example, if application responsiveness is one of our SLOs, we should make sure our monitoring tool can detect changes in latency and response time. But how do we do this without putting our systems at risk? This is where Chaos Engineering helps.

Chaos Engineering is the practice of deliberately injecting failure into a system, observing how the system responds, and using our observations to improve its reliability. The key word here is observe: without visibility, we can’t accurately determine how failures affect our systems, our SLOs, and the customer experience. Observability helps us measure the impact of failures—and the impact of our fixes—in a meaningful and objective way.
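The idea of validating a latency alert with an experiment can be sketched without touching a real system. The example below simulates request latencies, adds an injected delay, and checks whether a 95th-percentile alert would fire. Everything here is hypothetical: the function names, the 50 to 70 ms baseline, and the 200 ms threshold are assumptions, and a real experiment would inject latency into running services and read the result from your monitoring tool.

```python
import random
from statistics import quantiles


def simulate_latencies(n, base_ms=50.0, injected_ms=0.0, seed=0):
    """Simulated request latencies in ms, with optional injected delay."""
    rng = random.Random(seed)
    return [base_ms + rng.uniform(0, 20) + injected_ms for _ in range(n)]


def p95(samples):
    """95th percentile: the last of the 19 cut points that split data into 20 parts."""
    return quantiles(samples, n=20)[-1]


def latency_alert_fires(samples, threshold_ms=200.0):
    """Stand-in for a monitoring rule that alerts on p95 latency."""
    return p95(samples) > threshold_ms


baseline = simulate_latencies(1000)
experiment = simulate_latencies(1000, injected_ms=300.0)

# Hypothesis: the alert stays quiet at baseline and fires under injected latency.
print("baseline alert:", latency_alert_fires(baseline))
print("experiment alert:", latency_alert_fires(experiment))
```

If the alert did not fire during the experiment, that would point to a gap in monitoring (for example, a threshold set too high or a metric that isn't being collected) that can be fixed before a real incident exposes it.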

Pairing Chaos Engineering with observability has many benefits, including:

- Helping establish an incident management program. This includes defining incident severity (SEV) levels, tracking mean time to detection (MTTD) and mean time to resolution (MTTR), addressing and mitigating failure modes, and preparing teams to respond to incidents. Read our tutorial on how to establish a high severity incident management program.

- Helping teams achieve their next nine of reliability by finding gaps in monitoring, simulating potential failures, and helping thoroughly test changes to systems before deploying to production. See our blog on incremental reliability improvement.

- Using observability data to directly guide the design of chaos experiments so that we can more thoroughly test our systems. See our tutorial on using observability to automatically generate chaos experiments.

To summarize...

Additional resources

- Watch: Using observability to generate chaos experiments in Gremlin

- Watch: Modern monitoring and observability with thoughtful chaos experiments by Datadog and Gremlin

- Watch: Mastering Chaos Engineering experiments with Gremlin and Dynatrace

- Read: Chaos Engineering monitoring & metrics guide

- Read: The KPIs of improved reliability

- Read: Defining dashboard metrics

- Read: Chaos Engineering with Gremlin and New Relic Infrastructure

